As if 2020 had not been a tough enough year, with forest fires, a pandemic, and the tragic deaths of Kobe Bryant and his daughter Gianna, it now appears that more than 100,000 women may have had fake nude photos of themselves spread online.

According to the BBC, the photos are taken from unsuspecting women’s social media accounts, and artificial intelligence is then used to digitally remove their clothes. The resulting images are spread on the messaging app Telegram.

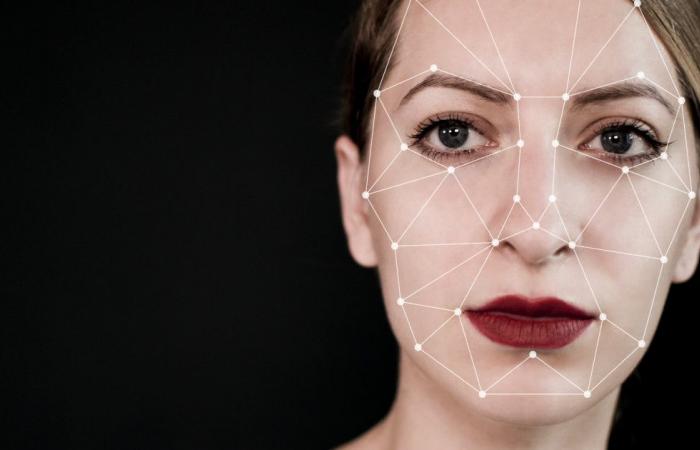

The intelligence company Sensity says the pictures are made by a “deepfake” bot. Deepfakes are computer-generated images and videos that make it look as if the people pictured are doing or saying things they have never done or said.

Deepfakes have also been used to manipulate videos of politicians, including Donald Trump.

Beyond politicians, several celebrities and ordinary women have had pornographic deepfake videos made of them.

By superimposing their faces onto the bodies of porn actors, the creators make it look as if the real person appeared in the film.

Unrealistic

The deepfake bot that removes the clothes operates inside Telegram itself, on a private channel where users can submit photos. This means anyone can, free of charge, submit a photo of a person they want to see naked, and the bot will remove the clothes within a few minutes.

Sensity’s report also states that some of those who have been digitally abused appear to be minors.

The BBC writes that it tested the bot with pictures of several women who had consented to the British broadcaster using their photos for this purpose.

According to the BBC, the results were poor: none of the images looked particularly realistic, and in one of them a woman’s navel had ended up on her diaphragm.

Spread online

A similar app was shut down last year, but it is believed that versions of the software are still circulating.

According to the BBC, the person who managed that app, known only by the nickname “P”, said he did not see it as anything serious.

“I don’t care much. It’s just entertainment, and it’s not as if there’s any violence involved. No one is going to use this for blackmail, since the results are so unrealistic.”

He also said that the team behind the app monitored which photos were shared and blocked users who shared photos of minors.

Telegram has not responded to the BBC’s requests for comment.

Shares pedophile content

According to the intelligence company’s report, fake nude photos of some 104,853 women were spread online between July 2019 and 2020.

The research also suggested that some users appeared to use the app solely to create and share pedophile content.

Most of the app’s users are from Russia and other former Soviet republics. The app was previously blocked in Russia, but the ban was lifted earlier this year.

This article, “Artificial intelligence: Sends fake nude photos”, was originally published at time24.news. The editorial team at AlKhaleej Today has confirmed and edited it, and it may have been transferred or quoted in full from that original source, where the story can also be read and followed.