Thanks to the company’s vertical integration of hardware and software, this is a monumental change that no one but Apple could pull off so quickly. The last time Apple undertook such a transition, in 2006, the company ditched IBM’s PowerPC ISA and processors in favor of Intel’s x86 designs. Today, Intel is being abandoned in turn, in favor of Apple’s own in-house processors and CPU microarchitectures based on the Arm ISA.

The new processor is called the Apple M1, the company’s first SoC designed specifically for Macs. With four large performance cores, four efficiency cores, and an 8-core GPU, it has 16 billion transistors on a 5nm process node. Apple is starting a new SoC naming scheme for this new family of processors, but at least on paper it looks a lot like an A14X.

Today’s event brought a ton of new official announcements, but, in typical Apple fashion, it was also short on detail. Today we will analyze the new Apple M1 news and perform a microarchitectural deep dive based on the previously released Apple A14 SoC.

The Apple M1 SoC: An A14X for Macs

The new Apple M1 really is the start of a major new journey for Apple. During the presentation, the company didn’t reveal many details about the design, but one slide told us a lot about the packaging and architecture of the chip:

This packaging style, with the DRAM embedded in the organic package, is not new for Apple; they have been using it since the A12X. However, it is something that is used only sparingly. For its higher-end chips, Apple prefers this type of packaging over the usual smartphone PoP (package on package), because these chips are designed for higher TDPs. Placing the DRAM next to the compute die rather than on top of it ensures that these chips can still be cooled efficiently.

This also means that we are almost certainly looking at a 128-bit DRAM bus on the new chip, similar to that on previous generation AX chips.

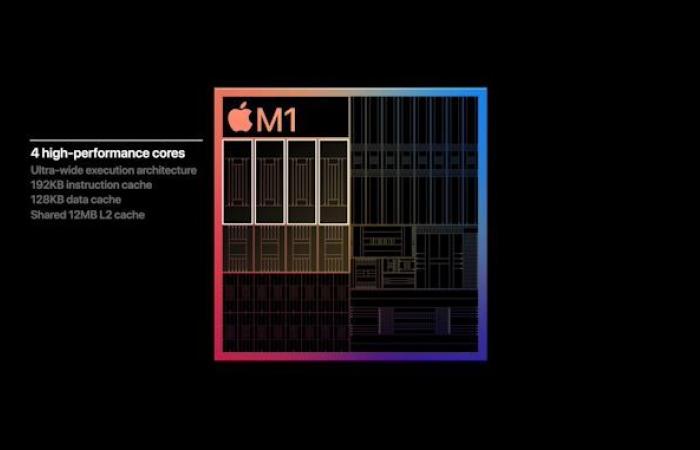

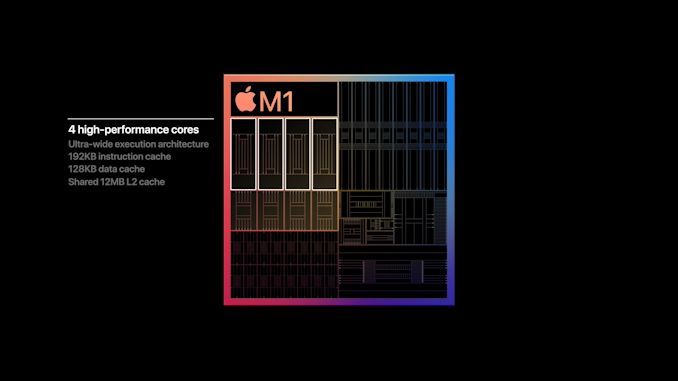

On the same slide, Apple also appears to have used an actual die shot of the new M1 chip. It matches Apple’s described features of the chip perfectly, and it looks like a real photograph of the die. Cue what is probably the fastest die annotation I’ve ever made:

On the left we see the four Firestorm high-performance CPU cores of the M1. Note the large amount of cache: the 12MB L2 cache was one of the surprises of the event, as the A14 still only contained 8MB of L2 cache. The new cache here appears to be divided into 3 larger blocks, which makes sense for this new configuration given Apple’s transition from 8MB to 12MB; after all, it is now shared by 4 cores instead of 2.

Meanwhile, the 4 Icestorm efficiency cores are located near the center of the SoC. Above them is the SoC’s system-level cache, which is shared by all IP blocks.

Finally, the 8-core GPU takes up a significant part of the die area and sits in the upper part of this die shot.

The most interesting thing about the M1 is how it compares with other CPU designs from Intel and AMD. All of the aforementioned blocks still cover only part of the die, with a significant amount of auxiliary IP taking up the rest. Apple mentioned that the M1 is a true SoC, integrating the functionality of what were previously several discrete chips in Mac laptops, such as I/O controllers and Apple’s SSD and security controllers.

According to Apple, the new CPU core is the fastest in the world. This will be a focus of today’s article as we dig deeper into the microarchitecture of the Firestorm cores and look at the performance figures of the very similar Apple A14 SoC.

We expect the Firestorm cores used in the M1, with their additional cache, to be even faster than what we are going to dissect in the A14 today, so Apple’s claim to have the fastest CPU core in the world seems extremely plausible.

The entire SoC has 16 billion transistors, 35% more than the A14 in the latest iPhones. If Apple was able to keep the transistor density similar between the two chips, we should expect a die size of around 120mm². This would be considerably smaller than the last-generation Intel chips in Apple’s MacBooks.
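The die-size estimate can be reproduced with a quick back-of-the-envelope calculation. The sketch below is only an illustration: the transistor count and the 35% figure come from the text, while the A14 die area of roughly 88mm² is an assumption not stated in this article, and the function name is made up for this example.

```python
# Rough die-size estimate, assuming equal transistor density on both chips.
M1_TRANSISTORS = 16e9      # from the article
M1_OVER_A14 = 1.35         # "35% more than the A14", from the article
A14_DIE_AREA_MM2 = 88.0    # ASSUMPTION: approximate A14 die area, not given in the article


def estimate_die_area(transistors, ref_transistors, ref_area_mm2):
    """Scale a reference die area by transistor count at constant density."""
    density = ref_transistors / ref_area_mm2  # transistors per mm^2
    return transistors / density


# Implied A14 transistor count, derived from the 35% figure.
a14_transistors = M1_TRANSISTORS / M1_OVER_A14
m1_area = estimate_die_area(M1_TRANSISTORS, a14_transistors, A14_DIE_AREA_MM2)

print(f"Implied A14 transistor count: {a14_transistors / 1e9:.2f} billion")
print(f"Estimated M1 die area: {m1_area:.0f} mm^2")
```

Under these assumptions the result lands just under 120mm², in line with the estimate above. A different density on the larger chip would shift the figure accordingly.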

Road To Arm: Second verse, like the first

Section by Ryan Smith

The fact that Apple can even make a major architectural transition so seamlessly is a small miracle, and one that Apple has a lot of experience in pulling off. After all, this isn’t the first time Apple has switched CPU architectures for its Mac computers.

The long-time PowerPC user came to a crossroads in the mid-2000s, when the Apple-IBM-Motorola (AIM) alliance responsible for PowerPC development increasingly struggled with further chip development. IBM’s PowerPC 970 (G5) chip performed well in desktops, but its power consumption was significant. This made the chip unsuitable for use in the growing laptop segment, where Apple was still using Motorola’s PowerPC 7400 (G4) chips, which had better power consumption but lacked the performance needed to compete with what Intel would ultimately achieve with its Core series of processors.

And so Apple played a card it had been keeping in reserve: Project Marklar. Taking advantage of the flexibility of Mac OS X and its underlying Darwin kernel, which, like other Unix systems, is designed to be portable, Apple had been maintaining an x86 version of Mac OS X. Although this was initially viewed largely as an exercise in good coding practice (ensuring that Apple wrote operating system code that was not unnecessarily tied to PowerPC and its big-endian memory model), Marklar became Apple’s exit strategy from a stagnant PowerPC ecosystem. The company would move to x86 processors, specifically Intel’s x86 processors, disrupting its software ecosystem but also opening the door to much better performance and new customer opportunities.
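To illustrate why the byte-order difference matters for portable code, here is a minimal sketch (not Apple’s code; the value and variable names are made up for illustration). It shows the same 32-bit integer laid out big-endian, as PowerPC Macs stored it, and little-endian, as x86 does, and what happens when code assumes the wrong order:

```python
import struct

value = 0x12345678

# The same 32-bit unsigned integer in both byte orders.
big = struct.pack(">I", value)     # big-endian: most significant byte first
little = struct.pack("<I", value)  # little-endian: least significant byte first

print(big.hex())     # 12345678
print(little.hex())  # 78563412

# Code that silently assumes the host byte order misreads foreign data:
misread = struct.unpack("<I", big)[0]
print(hex(misread))  # 0x78563412

# Portable code states the byte order explicitly instead of assuming it.
portable = int.from_bytes(big, byteorder="big")
print(portable == value)  # True
```

Code that always names the byte order of its on-disk and on-wire formats behaves the same on either architecture, which is the kind of discipline a dual-architecture build enforces.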

The move to x86 was a huge win for Apple in every way. Intel’s processors delivered better performance per watt than the PowerPC processors Apple left behind, and when Intel launched the Core 2 (Conroe) processor series at the end of 2006, it firmly established itself as the dominant force in PC processors. This ultimately fueled Apple’s trajectory for years to come, allowing it to become a laptop-centric company with proto-ultrabooks (MacBook Air) and its incredibly popular MacBook Pros. Similarly, x86 brought Windows compatibility with it, introducing the ability to boot Windows directly or, alternatively, run it in a virtual machine with very little overhead.

However, the cost of this transition came on the software side. Developers had to use Apple’s latest toolchains to create universal binaries that could run on both PPC and x86 Macs, and not all of Apple’s previous APIs made the leap to x86. Developers made the jump, of course, but it was a transition with no real precedent.

For a short time at least, Rosetta, Apple’s PowerPC translation layer for x86, bridged the gap. Rosetta allowed most PPC Mac OS X applications to run on the x86 Macs, and while performance was somewhat hit-and-miss (PPC on x86 isn’t the easiest thing to do), the higher performance of the Intel CPUs helped carry things for most non-intensive uses. Ultimately, Rosetta was a band-aid for Apple, and one that Apple ripped off relatively quickly: Rosetta was discontinued by the time of Mac OS X 10.7 (Lion) in 2011. So even with Rosetta, Apple made it clear to developers that they were expected to update their applications for x86 if they wanted to keep selling them and keep users happy.

Ultimately, the transition from PowerPC to x86 set the tone for the modern, agile Apple. Since then, Apple has built an entire development philosophy around moving fast and changing things as it sees fit, with limited regard for backward compatibility. This has left users and developers with few options but to enjoy the ride and keep up with Apple’s development trends. However, it has also given Apple the ability to adopt new technologies early and, if necessary, break old applications so that new features are not held back by backward compatibility.

All of this has happened before, and it will happen again starting next week, when Apple launches its first Apple M1-based Macs. Universal binaries are back, Rosetta is back, and Apple’s push for developers to get their applications up and running on Arm is in full swing. The transition from PPC to x86 created the template for an ISA change at Apple. Having pulled off that transition successfully, Apple will do it all over again over the next few years as it becomes its own chip supplier.

A Microarchitectural Deep Dive & Benchmarks

On the following page, we’ll examine the A14’s Firestorm cores, which are also used in the M1, and run some extensive benchmarks on the iPhone chip, setting the baseline for the minimum of what to expect from the M1.

The original version of this story was published at de24.news. The editorial team at AlKhaleej Today has confirmed and modified it, and it may have been reproduced or quoted in full from that source, where you can read and follow the story.