November 7, 2020 by Johnna Crider

In a video from YouTuber Dr. Know-it-all Knows it all, the good doctor shared his thoughts on Tesla’s Full Self-Driving (FSD) beta and why it’s such a big deal. Yes, we all know how revolutionary the technology behind FSD is, but do we really understand it? The doctor dove into trajectory projection and 4D data continuity, and he explained why Andrej Karpathy and Elon Musk keep talking about the importance of Tesla’s new 4D training and processing.

“The two biggest elements are data continuity and trajectory projection,” he explained. “Together, these two enable a massive leap forward and open a path to true level 4 autonomy.” He began with a little background on his master’s thesis, “Image-Based Content Retrieval via Class-Based Histogram Comparisons,” for the Institute of Artificial Intelligence at the University of Georgia, Athens.

He described the image-based system as a quasi-semantic method: a neural network identifies the objects in an image, and the system then finds other images containing those objects in the image database. “Semantically, if a computer recognizes a cat, that doesn’t mean it understands that it is a cat. It just means that this arrangement of edges, pixels, colors, etc. is tied to a label that is known as ‘cat.’ And that’s how it does it.”
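The thesis title mentions class-based histogram comparisons. As a hedged illustration only (this is not the method from the thesis, whose details the video summary doesn’t give), one simple reading is: a classifier assigns a class label to each region of an image, each image is summarized as a normalized histogram of those labels, and database images are ranked by histogram intersection with the query. The image names, labels, and the `label_histogram`, `intersection`, and `retrieve` helpers below are hypothetical.

```python
from collections import Counter


def label_histogram(labels: list[str]) -> dict[str, float]:
    """Turn per-region class labels into a histogram whose values sum to 1."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()}


def intersection(h1: dict[str, float], h2: dict[str, float]) -> float:
    """Histogram intersection: sum of per-class minimums (1.0 means identical)."""
    return sum(min(h1.get(k, 0.0), h2.get(k, 0.0)) for k in set(h1) | set(h2))


def retrieve(query: list[str], database: dict[str, list[str]], top_k: int = 2) -> list[str]:
    """Rank database images by how closely their label histograms match the query's."""
    q = label_histogram(query)
    ranked = sorted(
        database.items(),
        key=lambda item: intersection(q, label_histogram(item[1])),
        reverse=True,
    )
    return [name for name, _ in ranked[:top_k]]


# Hypothetical per-region labels a classifier might have produced for each image.
database = {
    "park.jpg": ["grass", "grass", "cat", "tree"],
    "sofa.jpg": ["cat", "cat", "sofa", "wall"],
    "road.jpg": ["road", "car", "car", "sky"],
}
print(retrieve(["cat", "cat", "wall", "wall"], database))  # ['sofa.jpg', 'park.jpg']
```

Note that “cat” here is just a string that happens to co-occur: the code compares label counts without any notion of what a cat is, which is exactly the quasi-semantic limitation he describes.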

He said that because the computer doesn’t understand objects the way we humans do, he calls the method quasi-semantic. People learn things along with language: a particular splash of color is mom or dad; another blob is your hand. “What we consider such basic knowledge is actually really difficult for humans to learn,” he emphasized, adding that evolution helps us with it. “It’s also very difficult for computers to understand. So this is still very much cutting-edge research.”

How this affects driving

The problem is that knowing an image contains something tied to a semantic name (a dog, for example) doesn’t give the computer any further information about what a dog actually is. It has no insight into a dog’s behavior. In the video, he compared a black Labrador Retriever to a garbage bag: to a computer, the two could look similar.

To drive effectively with level 4 and 5 autonomy, the computer must know the difference between them. He also pointed out that, traditionally, even if the computer could identify a dog in one picture, that didn’t help it figure out what was in the next picture. “That is continuity,” he remarked, then added, “If I know what something is in one picture, I should be able to know that it is in the next picture, and the next picture; in other words, four-dimensionally, over the course of time.” That’s why they keep talking about “4D.” Time, or continuity, is the fourth dimension.
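To make “if I know what something is in one picture, I should know it in the next” concrete, here is a minimal sketch, assuming each frame yields object positions in meters and that the same object moves only a short distance between frames. Each new detection inherits the label of the nearest previous object within a gating distance; otherwise it becomes a new object that must be classified from scratch. The `associate` function, the 1.0 m gate, and the coordinates are hypothetical, and greedy nearest-neighbor matching is a deliberately simple stand-in, not Tesla’s method.

```python
import math


def associate(tracks: list[dict], detections: list[tuple[float, float]], gate: float = 1.0) -> list[dict]:
    """Carry labels forward by matching each new detection to the nearest previous track.

    tracks: last frame's objects, e.g. {"id": 0, "label": "dog", "pos": (x, y)}.
    detections: this frame's object positions (x, y) in meters.
    gate: assumed max distance (m) an object can move between frames and still
          count as the same object.
    """
    next_id = max((t["id"] for t in tracks), default=-1) + 1
    unused = list(tracks)  # tracks not yet claimed by a detection in this frame
    updated = []
    for pos in detections:
        nearest = min(unused, key=lambda t: math.dist(t["pos"], pos), default=None)
        if nearest is not None and math.dist(nearest["pos"], pos) <= gate:
            # Continuity: same object as last frame, so it keeps its id and label.
            unused.remove(nearest)
            updated.append({"id": nearest["id"], "label": nearest["label"], "pos": pos})
        else:
            # No plausible predecessor: a new object that still needs classifying.
            updated.append({"id": next_id, "label": "unknown", "pos": pos})
            next_id += 1
    return updated


previous = [{"id": 0, "label": "dog", "pos": (5.0, 2.0)}]
current = associate(previous, [(5.3, 1.8), (20.0, -3.0)])
print(current)
# [{'id': 0, 'label': 'dog', 'pos': (5.3, 1.8)}, {'id': 1, 'label': 'unknown', 'pos': (20.0, -3.0)}]
```

The dog that moved about 0.36 m keeps its identity without being re-recognized, while the far-away detection starts over as “unknown.”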

Dog example: Google search vs. video sequence processing

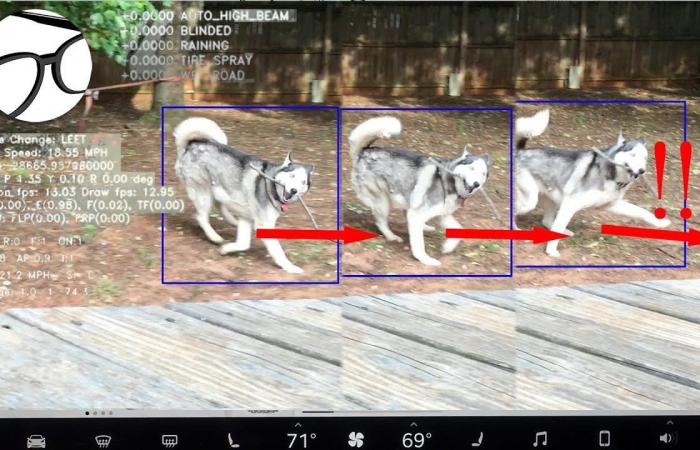

The doctor compared a Google image search for a dog with video sequence processing to point out the main differences. When you search for pictures of a dog, the search engine brings up thousands of pictures of different dogs in different situations. It can work out what those pixels and shapes indicate and just needs to find images that match that particular label. In video sequence processing, by contrast, the computer must process every frame. Doc showed video stills of a dog in a blue box, frame by frame.

In the first picture, the dog is standing with a branch in its mouth. In the next picture, the dog has moved but is still in the same pose as in the first. Picture three, however, showed the dog in a completely different pose, and he pointed out that the computer may have more trouble identifying it as a dog. “This is very inefficient because it has to reprocess everything every single frame, and it’s prone to error in every frame, since it may just decide that one of the frames isn’t a dog,” he said. All of a sudden you have dog, dog, dog, dog, not dog, not dog, dog, dog, and that kind of glitch causes problems with driving.
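One simple way to suppress that kind of flicker is to let neighboring frames vote. The sketch below is an illustration of temporal smoothing in general, not of how FSD does it: each frame’s label is replaced by the majority label in a window of five frames (a hypothetical size), and ties keep the frame’s own label. The `smooth_labels` helper is hypothetical.

```python
from collections import Counter


def smooth_labels(labels: list[str], window: int = 5) -> list[str]:
    """Majority vote over a centered window of frames; ties keep the frame's own label."""
    half = window // 2
    smoothed = []
    for i, own in enumerate(labels):
        counts = Counter(labels[max(0, i - half): i + half + 1])
        # Highest count wins; on a tie, prefer this frame's original label.
        smoothed.append(max(counts, key=lambda label: (counts[label], label == own)))
    return smoothed


raw = ["dog", "dog", "dog", "dog", "not dog", "not dog", "dog", "dog"]
print(smooth_labels(raw))  # ['dog', 'dog', 'dog', 'dog', 'dog', 'dog', 'dog', 'dog']
```

A genuine, lasting change (say, four frames of “dog” followed by four of “bag”) still comes through, because the new label soon wins the vote. The catch is that a centered window needs two future frames, which adds delay; a car would have to use a look-back-only window or filter, trading some responsiveness for stability.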

Current technology

The doctor said this approach leads to very rudimentary driving skills. “It’s like having a new student driver in your car. It’s very cautious. It makes silly mistakes. It hugs the middle of the lane when it gets confused. It slows down in the middle of the road for no reason: phantom braking, etc.” He noted that if we really want the sci-fi self-driving we expect, with the car driving itself, these problem areas need to be resolved. To do this, we need “continuity of data over a long sequence of images or video and a temporal semantic understanding of what the car is seeing.” This is where 4D training comes in.

He noted that Tesla’s FSD had been looking at eight individual cameras, along with radar and sonar, about thirty times per second. This is very fast processing. “All of this information was processed separately. It identified the objects and then acted on them,” he said, adding that for the next frame it threw all that information away and started over.

What does the new FSD beta with 4D capabilities offer?

As Elon has stated, and as the doctor has noted in his videos, this is a complete, fundamental rewrite of the software, with new neural network models and new algorithms. It runs on Tesla’s inference engine, the chips Tesla puts in its vehicles. All eight cameras are now linked into one view, and, he noted, the input is processed as a video sequence rather than as individual images. In addition, the object recognition carries more information.

Going back to the dog example, the doctor pointed out that the computer can now intelligently work out whether frame 2 shows a dog by asking whether the dog has simply moved a little. This also makes errors in identifying the dog over time less likely. As the good doctor put it, “Obviously, this data continuity over time is exactly what Musk and Karpathy mean by 4D. This 4D training opens the door to trajectory projection.” That requires the computer to have a lot of knowledge, because it needs to understand what these objects are.
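“Has the dog just moved a little?” can be expressed as carrying last frame’s belief forward instead of starting from scratch. Below is a minimal binary Bayes filter, offered as a generic illustration rather than Tesla’s approach. It assumes the per-frame detector score `s` can be read as P(observation | dog), with P(observation | not dog) = 1 − s, and it uses a hypothetical `switch` probability so the belief never locks at exactly 1. The `track_dog_belief` helper and the scores are hypothetical.

```python
def track_dog_belief(scores: list[float], prior: float = 0.5, switch: float = 0.05) -> list[float]:
    """Binary Bayes filter: returns the posterior P(dog) after each frame.

    scores: per-frame detector outputs, read as P(observation | dog);
            P(observation | not dog) is assumed to be 1 - score.
    switch: assumed per-frame chance the belief should drift, so it never saturates.
    """
    belief = prior
    beliefs = []
    for s in scores:
        # Predict: carry last frame's belief forward, allowing a small chance of change.
        belief = belief * (1 - switch) + (1 - belief) * switch
        # Update: Bayes' rule with this frame's evidence.
        belief = belief * s / (belief * s + (1 - belief) * (1 - s))
        beliefs.append(belief)
    return beliefs


scores = [0.9, 0.9, 0.9, 0.3, 0.3, 0.9]  # two weak frames where the pose is unusual
per_frame = ["dog" if s > 0.5 else "not dog" for s in scores]
beliefs = track_dog_belief(scores)
filtered = ["dog" if b > 0.5 else "not dog" for b in beliefs]
print(per_frame)  # ['dog', 'dog', 'dog', 'not dog', 'not dog', 'dog']
print(filtered)   # ['dog', 'dog', 'dog', 'dog', 'dog', 'dog']
```

The two weak frames lower the belief (to roughly 0.69) but don’t flip it, whereas a long run of low scores would eventually convince the filter the object isn’t a dog.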

Back to the dog: in the first frame, the computer processes the image and understands that this is a dog, an object that can move on its own in certain ways. In frame 2, the dog is still there, but it has moved and changed its pose. In the third frame, the computer recognizes that the dog is stepping forward and to the right, toward the car, and it can understand that this object can move quickly and cross the path of what the doctor calls the “ego car,” meaning the car that contains the computer. “The computer now knows that it must take immediate action to avoid this object, which can move by itself, or at least brake quickly to avoid a collision with it.”
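The simplest form of trajectory projection is to assume each object keeps its current velocity and to check whether its projected position comes too close to the ego car’s own projected position. The sketch below does exactly that, as an illustration of the idea rather than Tesla’s predictor. Assumptions: coordinates are relative to the ego car at t = 0 (x forward, y left, meters), the ego car drives straight at constant speed, and a 1.5 m `radius` stands in for the car’s footprint. The `first_conflict` helper and all numbers are hypothetical.

```python
import math


def first_conflict(obj_pos, obj_vel, ego_speed, horizon=3.0, dt=0.1, radius=1.5):
    """Earliest time (s) a constant-velocity object comes within `radius` m of the
    ego car, or None if that doesn't happen within `horizon` seconds.

    obj_pos, obj_vel: (x, y) position (m) and velocity (m/s) relative to the ego car.
    ego_speed: the ego car's assumed constant forward speed (m/s).
    """
    steps = int(round(horizon / dt))
    for k in range(steps + 1):
        t = k * dt
        # Project both the object and the ego car forward to time t.
        ox = obj_pos[0] + obj_vel[0] * t
        oy = obj_pos[1] + obj_vel[1] * t
        ex = ego_speed * t
        if math.hypot(ox - ex, oy) <= radius:
            return round(t, 2)
    return None


# A dog 15 m ahead and 3 m to the right, moving toward the lane at 2 m/s,
# while the ego car drives at 10 m/s (36 km/h).
print(first_conflict((15.0, -3.0), (0.0, 2.0), ego_speed=10.0))  # 1.4
# The same spot, but the object isn't moving: no conflict within 3 s.
print(first_conflict((15.0, -3.0), (0.0, 0.0), ego_speed=10.0))  # None
```

Seeing a conflict about 1.4 s out is what gives the car time to brake or steer, rather than reacting only once the dog is already in front of it.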

In the case of a garbage bag, the computer would treat it differently from the dog, because it has semantic knowledge of what these two objects are. The computer would know that a garbage bag is an object that doesn’t move on its own, and if the bag were in the way, the car would use other calculations to avoid hitting it.
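Here is one way to picture how semantic knowledge changes the calculation. The sketch assumes a hypothetical table of maximum speeds per class and asks whether an object could reach the ego car’s lane before the car arrives. A garbage bag’s assumed speed is zero, so it only matters if it is already in the lane; unknown classes get a cautious default. The `MAX_SPEED` values, the 1.5 m lane half-width, and the `could_enter_lane` helper are all illustrative assumptions, not figures from the video.

```python
# Hypothetical worst-case speeds (m/s) per class; illustrative only.
MAX_SPEED = {"dog": 10.0, "garbage bag": 0.0}
UNKNOWN_SPEED = 5.0  # assumed cautious default for classes not in the table


def could_enter_lane(label: str, lateral_offset: float, time_to_reach: float,
                     lane_half_width: float = 1.5) -> bool:
    """Could an object of this class get into the ego lane before the car arrives?

    lateral_offset: distance (m) from the lane center to the object.
    time_to_reach: seconds until the ego car reaches the object's position.
    """
    # How far the object would have to travel sideways to be in the lane (0 if already in it).
    gap = max(0.0, abs(lateral_offset) - lane_half_width)
    reach = MAX_SPEED.get(label, UNKNOWN_SPEED) * time_to_reach
    return reach >= gap


print(could_enter_lane("dog", lateral_offset=3.0, time_to_reach=1.5))          # True
print(could_enter_lane("garbage bag", lateral_offset=3.0, time_to_reach=1.5))  # False
print(could_enter_lane("garbage bag", lateral_offset=0.5, time_to_reach=1.5))  # True (already in the lane)
```

Two objects that look alike on camera get different answers purely because of their labels, which is why telling the Labrador from the garbage bag matters.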

“This type of trajectory projection is something humans are very good at, at least if we’re paying attention while driving. Distracted driving is a whole different matter. But computers really haven’t been able to do it so far, which is why they’re not very good at driving themselves. That’s why companies like Waymo and GM Cruise use LiDAR to map their surroundings precisely and track these things.” He compared such systems to a roller coaster following its track. They can detect objects in the way and brake if necessary, but they lack the kind of understanding built into Tesla’s new FSD software.

Not only are there countless objects in the world that can get into the car’s path, but the computer must also work out whether these objects are moving or standing still, how dangerous they are, and then react, for example when a log falls off the back of a truck. In this context, the computer must also determine whether things are far enough away and, if not, how to react. A good example of this is when I cross at a crosswalk. If a driver isn’t paying attention and accidentally accelerates instead of braking, they could hit me; this has happened several times. With FSD, the computer would notice that the light is red, and the car would likely stop before it reaches the crosswalk.
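Whether a car can stop before a crosswalk comes down to a standard physics estimate: the distance covered during the reaction time plus the braking distance at constant deceleration, d = v·t_react + v²/(2a). The sketch below computes it; the 50 km/h speed, 7 m/s² deceleration, and reaction times are illustrative assumptions, not measured figures for human drivers or for FSD, and the helper names are hypothetical.

```python
def stopping_distance(speed_kmh: float, reaction_s: float, decel_mps2: float) -> float:
    """Meters traveled while reacting plus while braking at constant deceleration."""
    v = speed_kmh / 3.6  # km/h -> m/s
    return v * reaction_s + v ** 2 / (2 * decel_mps2)


def can_stop_before(distance_m: float, speed_kmh: float,
                    reaction_s: float = 1.5, decel_mps2: float = 7.0) -> bool:
    """True if the car can stop within `distance_m` under the assumed parameters."""
    return stopping_distance(speed_kmh, reaction_s, decel_mps2) <= distance_m


print(round(stopping_distance(50, reaction_s=1.5, decel_mps2=7.0), 1))  # 34.6
print(round(stopping_distance(50, reaction_s=0.5, decel_mps2=7.0), 1))  # 20.7
print(can_stop_before(30.0, 50))                  # False
print(can_stop_before(30.0, 50, reaction_s=0.5))  # True
```

Under these assumptions, about 21 m of the 34.6 m is covered before the brakes are even applied, so reacting sooner matters as much as braking hard.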

The video Dr. Know-it-all Knows it all shared was very informative and offered some insight into the world of FSD, neural networks, and artificial intelligence. You can watch the full video here.

Do you value the originality of CleanTechnica? Consider becoming a CleanTechnica member, supporter, or ambassador – or a patron on Patreon.

Keywords: Tesla, Tesla Autopilot, Tesla Full Self-Driving

About the author

Johnna Crider is a Baton Rouge artist, gem and mineral collector, member of the International Gem Society, and a Tesla shareholder who believes in Elon Musk and Tesla. Elon Musk advised her in 2018 to believe in the good. Tesla is one of many good things to believe in. You can find Johnna on Twitter.

These were the details of the news story “4D data continuity and trajectory projection” from DE24 News.

The original story was published at de24.news; the editorial team at AlKhaleej Today verified and modified it, and it may have been transferred or quoted in full. You can read and follow the story at its original source.